How Sony Pictures Imageworks cracked the look with Katana and Mari

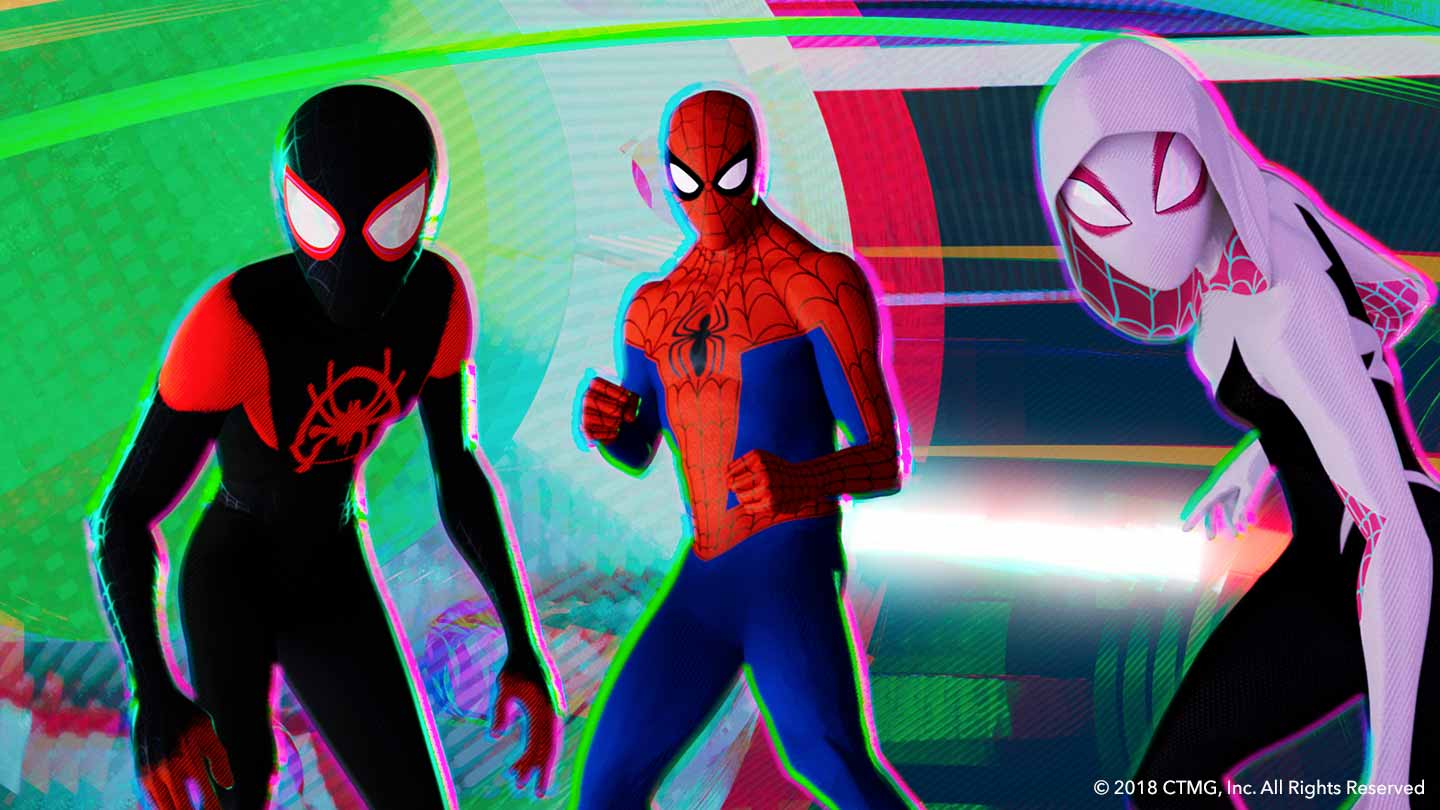

The directors of Spider-Man: Into the Spider-Verse had a clear idea of what they were trying to achieve.

They envisioned a film couched in the visual language of how comic books are made, with a look informed by ideas going all the way back to silk-screening and the printing press. It fell to the VFX crew at Sony Pictures Imageworks (SPI) to make this vision a reality.

We caught up with key members of the look development and texturing teams to find out how they harnessed the power of Katana and Mari on the Oscar-winning production.

Achieving a unique, stylized look

Given the unique vision for the film, it’s no surprise that lookdev sups like Bret St. Clair spent a lot of their time problem solving, as he explains: “There was this understanding that I was going to be involved in addressing challenges.

"Of course, on this show, the stylized look was clearly the unique problem, and that is where I landed.

"The problems of stylization often forced us to adopt entirely new ways of working. We spent much of our time, especially in the early stages, far from the beaten path. It’s about as far as I’ve been from a ‘normal’ production since the wild west days when I worked on my first shows."

SPI used Katana (software that the studio originally developed and has long used) and Arnold as its primary lighting and rendering tools for the project.

Using Katana, Bret spent a huge amount of his time mocking up networks to generate custom passes for the idiosyncratic looks they were reaching for. He explains:

“Katana was really useful when we needed to test ideas. And on this show, we needed to test every idea. We didn’t want to leave a stone unturned. One night I literally ran 480 concurrent tests exploring every possible combination of shader settings for our invisibility effect.

"I know that sounds a bit extreme. The problem was that the effect had to ripple and yet feel like invisibility (as opposed to, say, refraction or cut paper fluttering). It had to have depth, and yet the effect had to somehow convey something that told you it was rooted in our graphic style and related to Miles specifically.

"And then there were the story requirements. There was this question of whether the effect should break his contours. The question of how these dots generate themselves. Of what they do to the surrounding texture — push? Pull? Absorb?

"There was dot scale. Density. There was the animation of the reveal. The whole effect was animated in the shader. There were quite a few ideas to boil down into shader parameters, and certainly it was nothing I expected to get right without understanding how this shader was going to animate.

"But the style was always adding this extra dimension of complexity when resolving any look, and our directors wanted to get it just right.

"So I built a crazy shader and then built a super-custom shotgun of a rendering tool to test it. The shader took a couple of days. The shotgun took a couple of hours. And then I fired it a couple of other times over the next three days (though I did pare it down a bit as we learned what worked). But it did work. We found our target.

"I used the same sort of approach when I was working on the anime background effects for Peni. We had this geometry which was all pretty self-similar.

"But the ref showed all this crazy variation in brush strokes that seemed to imply we’d need to create all these different geometry styles.

"There were washes, and fat strokes, and wiggly lines and spatters…. I didn’t want to bug effects for all this stuff since it wasn’t even clear what the geometry would need to look like. So I decided to hack together these shaders that would reshape the geometry so that I could at least test the ideas.

"So again, I was initially testing with maybe twenty passes (and lots of AOVs) to find the sorts of pieces I needed. But when I arrived in the morning, I had everything I needed to nail the look. And I had a machine already built to generate the requisite passes. I handed it off and I never heard another word.

"And that’s the thing. Ultimately the results of any lookdev work were bound to be handed off. Because we often had characters and sets and crazy effects that generally had completely different lighting and processing requirements living side-by-side in our world, it was a tremendous amount of complexity to manage.

"I’d say that’s where Katana really came in handy. It made it easy to share things like that. Even incredibly complex things.”
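The "shotgun" approach Bret describes, firing off hundreds of tests that cover every combination of shader settings, is at heart a Cartesian product over a handful of parameter lists. The sketch below is plain Python, not SPI's tool (which the interview does not show) and not Katana's API. The parameter names echo the questions raised in the interview, but the names, the values, and the `reveal_speed` parameter are hypothetical, chosen only so that the grid works out to 480 combinations.

```python
from itertools import product

# Hypothetical sweep definition: names and values are illustrative only.
# 5 * 4 * 2 * 3 * 4 = 480 combinations.
SWEEP = {
    "dot_scale": [0.25, 0.5, 1.0, 2.0, 4.0],
    "dot_density": [0.2, 0.4, 0.6, 0.8],
    "break_contours": [False, True],
    "texture_mode": ["push", "pull", "absorb"],
    "reveal_speed": [0.5, 1.0, 1.5, 2.0],
}


def build_sweep(grid):
    """Return one settings dict per combination of the values in grid."""
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, combo)) for combo in product(*value_lists)]


def job_label(index, settings):
    """Build a readable label so each test render can be traced to its settings."""
    parts = [f"{name}={value}" for name, value in settings.items()]
    return f"test_{index:03d}__" + "__".join(parts)


jobs = build_sweep(SWEEP)
print(len(jobs))  # 480
print(job_label(0, jobs[0]))
```

Each settings dictionary could then be handed to whatever render submission mechanism a studio uses. Paring the sweep down after the first round, as Bret mentions, amounts to shortening the value lists.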

Less a lighting scene than a lighting machine

As Bret recalls, adopting Katana had benefits for lighting the scenes in Spider-Man across the board. He says: “On the atomic scale it made things easier because I was able to easily convert a process into a macro.”

Macros are nodes in Katana that wrap any single node, or group of nodes, and publish them so that their state is saved and they can be recalled elsewhere. Macros wrap functionality, enabling you to hide or group logic, so that you can then re-use the macros multiple times.

They’re a great way to ‘template’ a process for fast and easy use in the future.

On the broader scale, this enabled the team to deal with all their conflicting ideas and rendering requirements as objects. “We knew that if we picked up the macro for this character, the character would pretty much know how to light, no matter what crazy world we dropped it into,” explains Bret.

Those hours spent testing and building shaders paid off later down the line. “In Katana, you invest a bit more effort in setting things up the right way, but the payoff is that the result is less a lighting scene than a lighting machine," says Bret. "And a machine you can tinker with. It’s modular. It’s easy to debug. And it’s easy to build some fairly nifty tools.”

What’s more, Bret is clear that no other tool would have been up to the job: “Unless tools have advanced dramatically since last I looked, it wouldn’t have happened," he says. "And if it had, it would have been a nightmare to manage.

"The advantage of Katana is that it gave us tools to manage complexity. Complexity was the main problem I saw on this show. Of any show I’ve worked on, I’d say this was probably the one where Katana gave us the most benefit.”

Managing huge complexity and myriad variations

To illustrate this last point, Bret points to the end battle sequence in the collider as a great example of the way Katana helped the team out.

“We had all of our major characters with all of their custom look requirements living together in one massive template," he explains. "Along with that, we had the collider environment itself.

"Then there were buildings, trains, cars and all sorts of other props that were appearing through the portal. And if that wasn’t enough, there were the dozens of elements that went into making the collider effects.

"Each of these assets carried its own lookdev. Some assets carried multiple variations. For many, they were glitching between looks. Often the effects that we were generating might be some new thing we’d invented that wasn’t published with the lookdev for the asset, so there were places where we were just jamming ideas together, but knowing that they would work.

"To be clear, we only started seeing the first publishes for those sequences a couple of months before delivery. So that’s when we first started exploring the look of those sequences. And there were some pretty extreme looks in them.”

Using Nuke and Katana together

Bret goes on to give a fascinating insight into the way the team used Katana and Nuke together on the project. “Speaking for myself, Katana was the testbed for many of the ideas I wanted to try," he says. "It was the default home for any new look or effect I created. But we didn’t want to keep everything in Katana.

"We had to deliver this show, and we were often getting very specific (and style-specific) notes concerning everything from where dots should lie on a character’s face on a given frame to the way brush patterns should move.

"And, if it all lived in the render, those weren’t the sorts of changes that we could expect to turn around more than once a day. Nearly every tool I built for this show was prototyped in Katana because it was easy, because I had access to whatever data I needed, and because we knew the result would be something we could pipeline.

"But our goal was always to push those things into Nuke — to find a way to package up the information we’d need in some clever AOV so that we could turn those tools interactive.

"It was all about time and allowing the artists to work more like artists. Or better yet, like designers and art directors. If a tool could just be a slider, that’s what it was. I’ll not tell you it was always easy for artists, or that we always got it right.

"We were pounding on these problems right up until the end, so workflows were always shifting and changing. But we hit the notes, and we’re proud of the things our artists were able to achieve.”

Ultimately, the team harnessed the combined power of Katana and Nuke to hammer out all of the rendering looks that were created for the film. Bret says: “We were able to do that and achieve such varied results because the tools are robust and they allowed us to work the way we needed.

"Between our tools and the tools that ship with these packages, there was very little development we required that artists couldn’t handle themselves. Considering the amount of innovation this show demanded, I think that’s pretty remarkable.”

Texturing on an industrial scale with Mari

Nuke and Katana weren’t the only Foundry tools used on Spider-Man: Into the Spider-Verse. Mari was also an integral part of the team’s texture pipeline.

Offering flexibility in combining procedurals, paint and adjustment layers — as well as their own in-house tool sets — Mari made the difficult challenge of surfacing hundreds of assets far more manageable, and easier to iterate on.

Edwin Fabros, texture paint supervisor at SPI, explains: “Mari was the main painting and surfacing package used on the film.

"We used it to paint and texture the majority of our characters, environments, and props.

"Other paint packages were also used to allow us to take advantage of their particular features, but then we would ingest those back into Mari to complete the final look of the textures.”

Mari has been integrated into SPI’s texture pipeline for some time now, thanks to its hardworking technicians and software developers. Because of this, the team were armed from the get-go with a set of in-house tools and a robust texturing workflow that was a key part of achieving the look of the film.

Like the look development and lighting departments, Edwin’s team worked hard to nail the very specific vision the directors had. He explains: “The biggest challenge was finding the right balance of creating the perfect look for a moving comic book film.

"There were many departments collaborating together to find the perfect recipe for how each of the disciplines were used to arrive at the final look. This meant that for the texture team, there were a lot of iterations that needed to be addressed and turned over fairly quickly.

"Mari really allowed us to do that, as we were able to easily manage details in layers within each of the channels.”

Harnessing the power of UDIM workflows

One of Mari’s core strengths lies in its support for UDIM workflows, allowing artists to work with hundreds of high-resolution texture maps as though they were a single tile, using projection to paint across several maps in a single stroke.
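For readers unfamiliar with the convention, UDIM tiles are numbered from 1001, counting up by one for each unit step in U (ten tiles per row) and by ten for each unit step in V. The sketch below shows that numbering in plain Python. The function names are hypothetical, and nothing here is Mari's API or a description of how Mari handles tiles internally.

```python
import math

TILES_PER_ROW = 10   # UDIM rows are ten tiles wide in U
FIRST_TILE = 1001    # the tile covering U in [0, 1) and V in [0, 1)


def udim_from_uv(u, v):
    """Return the UDIM tile number that contains the UV coordinate (u, v)."""
    u_tile = math.floor(u)
    v_tile = math.floor(v)
    if not 0 <= u_tile < TILES_PER_ROW or v_tile < 0:
        raise ValueError(f"UV ({u}, {v}) lies outside the UDIM tile range")
    return FIRST_TILE + u_tile + TILES_PER_ROW * v_tile


def tile_from_udim(udim):
    """Return the (u_tile, v_tile) offsets for a UDIM tile number."""
    if udim < FIRST_TILE:
        raise ValueError(f"{udim} is not a valid UDIM tile number")
    v_tile, u_tile = divmod(udim - FIRST_TILE, TILES_PER_ROW)
    return u_tile, v_tile


print(udim_from_uv(0.5, 0.5))  # 1001
print(udim_from_uv(1.5, 0.5))  # 1002
print(udim_from_uv(0.5, 1.5))  # 1011
print(tile_from_udim(1011))    # (0, 1)
```

Because every tile has its own number, a single asset can carry many separate high-resolution maps while still being addressed through one continuous UV layout, which is what makes painting across tiles in one stroke possible.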

Edwin recalls that the team made good use of these workflows on the project. “One of the things that Mari allows us to do that is more challenging in other paint packages is paint across UDIMs," he says.

"We also took advantage of features like tiling, and used various projection tools (like axis and tri-planar) to quickly mask and isolate different features of the characters and environments.

"Using a combination of these things allowed us to quickly rough in textures and surfaces throughout the project.”

He concludes by noting that Mari has left a lasting impression on the team, saying: “Mari definitely had a significant impact on our surfacing department's ability to proficiently texture paint the immense volume of assets necessary to create this distinctive and beautiful animated feature.

"It's one of the best paint packages we've ever used.”

Check out the second article in our two-part series to find out more about how Sony Pictures Imageworks created the signature graphic look of Spider-Man: Into the Spider-Verse almost entirely in comp.
