What’s on the horizon for machine learning in 2023?

Although machine learning (ML) is not new to the visual effects (VFX) industry, it remains a hot topic.

We previously updated you on the latest ML advancements in 2020, and several years on, machine learning is now part of the mainstream. Everyone, regardless of their age or knowledge of new technologies, wants to know all about ChatGPT. It has truly captured public attention, with some suggesting that 2023 is the year machine learning changes the world, while others are giving more specific predictions for this year.

These last few years have been transformative for machine learning, AI, and the opportunities they bring. In our previous update, we covered just three topics — now we could fill a whole book. But, as much as we want to, we won't. Instead, we've highlighted the three machine learning topics you don't want to miss.

Read on to find out about the top machine learning technologies that will have an impact on the Media and Entertainment industry...

Fewer years, more technique

Enough to make any skincare guru or plastic surgeon jealous, de-aging technology has advanced significantly. With well-known examples including Will Smith in Gemini Man and Robert De Niro in The Irishman, it seems like everyone is going through digital plastic surgery. Techniques have matured, and artists are making the most of AI face-swapping tools. In The Book of Boba Fett, ILM created a highly detailed, youthful Luke Skywalker thanks to its digital facial animation techniques and machine learning. Even as AI and machine learning techniques take big steps towards becoming the norm, de-aging is still finding its justified place in production. Done well, it presents a real opportunity for storytellers to enhance their creativity.

Of course, there’s still the conventional way: flashbacks of characters sporting the hairstyles and clothes that were trendy at the time. But are we buying it? Are clothes, style, and make-up enough for us to believe the storyteller? Probably not. That’s where machine learning comes in, making de-aging cheaper and more accessible, elevating the project, and keeping it credible for viewers.

An impressive example of de-aging appeared in Series 4 of Stranger Things, where technology combined with expert artistry to de-age the main character, Eleven, played by Millie Bobby Brown. The Stranger Things VFX team shot live action with child actor Martie Blair, whose proportions were similar to those of a younger Millie Bobby Brown, and then composited Millie's de-aged face onto the younger actress. By combining projection, 3D modeling, and elements of the plate they shot with the child actor, they created a realistic eight-year-old Eleven. The de-aging of Eleven was done by Lola VFX, and the studio admitted it wasn’t an easy task. It’s not just a deepfake, which wouldn’t have worked in this context anyway, but a special technique the studio developed so that expressions are reproduced as accurately as possible (VFX Supervisor Michael Maher Jr). This shows once again that machine learning techniques alone aren’t enough unless they’re adapted and merged with the experience and technical ability of a VFX artist.

Explosion of third-party models

Machine learning has many applications and possibilities, so why not make it more accessible? With more open source frameworks such as PyTorch becoming available, the sky's the limit and there’s constant interest in reaching it. Since March 2018, there’s been exponential growth in new machine learning repositories, including more than 38,000 new PyTorch models. However, despite the abundance of existing implementations that show extensive potential, using these models presents several challenges for VFX and animation studios. One is that getting a model up and running often requires the technical expertise of a researcher, engineer, or TD. What's more, integrating a machine learning model into an artist’s pipeline is an elaborate process and, even once integrated, the model needs an artist-friendly interface that gives users enough flexibility for an efficient creative process.

To solve these challenges, our R&D team introduced Cattery, a free library of third-party models running natively in Nuke. Designed to bridge the gap between research and post-production, Cattery enables artists to use these open source machine learning models in their own pipelines. In the Nuke 13 series, we introduced CopyCat, a plug-in that enables artists to train neural networks to create custom effects for their own image-based tasks. CopyCat was designed to tackle complex or time-consuming tasks such as creating a garbage matte. Cattery, released in Nuke 14.0, is a natural extension and enables sharing of popular models.

Creating machine learning tools to help artists save time and empower them to focus on creativity has always been a priority for Foundry. That’s why our R&D teams are experimenting with SmartROTO. This project explores whether it's possible for machine learning to interactively assist roto artists without disrupting established workflows, enabling them to accelerate basic work and free up more time for creativity.

What’s up with generative AI?

We left the elephant in the room for last: perhaps the biggest trend, and one that has reached fever pitch over the past year. Generative AI tools such as ChatGPT, Midjourney, Stable Diffusion, and GitHub Copilot have certainly drawn attention, and it’s no surprise. Not only do they attract curious minds with their capabilities, but there’s also interest from a legal standpoint. Currently, Stable Diffusion’s parent company, Stability AI, is facing two lawsuits regarding copyright infringement. Because the system was trained on images and artworks scraped from the web, artists argue they “were neither asked nor compensated for the rights to use their art in this way.” (Business Insider, 2023). The verdict will set a precedent for generative AI in general. A loss for Stability would restrict how training material can be gathered. A win would underscore the new world of copyright in the AI era.

Whatever the conclusion, this topic is worth discussing from both an ethical and a philosophical point of view. Do these tools enable anyone to be a writer or a visual artist? Will there still be a distinction between skillful, talented artists and those who simply learn to use machine learning intelligently? For the VFX industry specifically, the answer doesn’t have to lie at either extreme. Ben Kent, Research Engineering Manager at Foundry, comments: “I don't think the people necessarily using these tools in our industry are thinking of using it for production, so much as for quick proofs of concept and ideation. For example, storyboarding or just concept art. They’d allow artists to iterate on concept art really quickly and get to the final idea sooner.”

This is the future

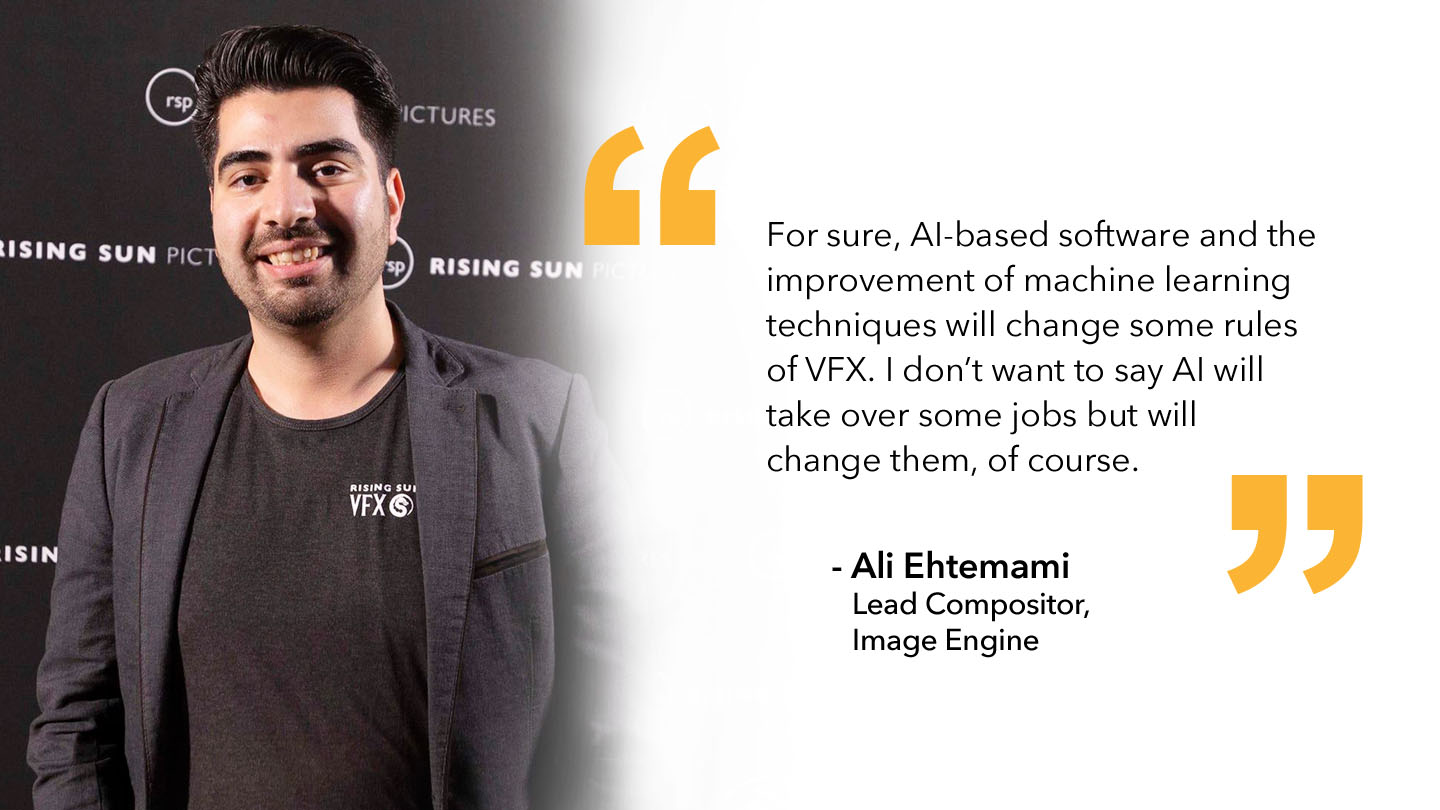

It's clear that machine learning will play an unavoidable role in the future of the VFX industry, not to replace artists but to empower them and accelerate their workflows, leaving more time for creativity. Our work with CopyCat, Cattery, and the ongoing SmartROTO project is testament to that. Ali Ehtemami, Lead Compositor for feature films and episodic shows at Image Engine, comments:

“For sure, AI-based software and the improvement of machine learning techniques will change some rules of VFX. I don’t want to say AI will take over some jobs but will change them, of course.”

Artists are already looking to adapt and are showing interest in this trend. It’s our mission to continue monitoring upcoming trends and advancements, so that we can put all of our R&D efforts into bringing you the most innovative tools in line with industry standards. From machine learning and asset-centric workflows to virtual production, we’re paving the way for workflows of the future by testing, developing, and solving the challenges of tomorrow.

Want to get faster results by adding machine learning to your workflow?

Sign up for our free CopyCat Masterclass.