Connecting workflows and removing pipeline friction with interoperability

For those who work in the visual effects (VFX) industry, maximizing workflow efficiency is often one of the main priorities. But with technology advancing rapidly, deadlines tightening and demand for content rising, efficiency is not always easy to come by.

Interoperability and connected pipelines offer a solution to this problem. With the rise of new production workflows and standards, from USD to color management, new opportunities are emerging to create more meaningful interoperability between digital content creation tools (DCCs) and stages of production. That’s why Foundry is continually evolving our tools to make the most of emerging technology, ensuring that artists continue to have the most efficient and customizable pipeline possible.

We’re stepping up our interoperability efforts between our software and third parties, with the aim of minimizing friction between artists and departments across the pipeline and giving them the efficient workflow they want.

In this article, we take a look at some of the innovative ways we’re extending our tools, and how you can create a more efficient and connected pipeline.

Katana and Nuke: connecting lighting and compositing

Interoperability is one of the buzzwords currently making the rounds in the VFX industry. It stems from the need to connect pipelines, enabling artists to move seamlessly between departments and see everything that is happening on a project in context.

At Foundry, we’re firmly committed to bringing this into our products, which is why we’ve introduced interoperability between Katana and Nuke in Katana 5.0, due to be released later this year. The power and scalability of Katana combined with the image processing of Nuke enables artists to visualize the final result from the outset. Any changes made in Katana automatically update in Nuke, so 3D lighting and 2D compositing can work in parallel, each well informed of the other's choices.

Many of the visuals for an animation project come from the final comp that’s done in Nuke, so being able to see these directly in Katana means artists know what they are getting is accurate. Plus, VFX artists working with a plate and rendered elements from Katana will be able to see them in a full comp environment, not just side-by-side.

The Katana to Nuke interoperability marks the start of a series of updates coming to Foundry products, all with the aim of removing pipeline friction and maximizing workflow efficiency. To learn more about this new feature and the other advancements happening in Katana 5.0, watch our recent Foundry Live talk, where the Look Development and Lighting teams offer exclusive insight into the future of Katana and Mari.

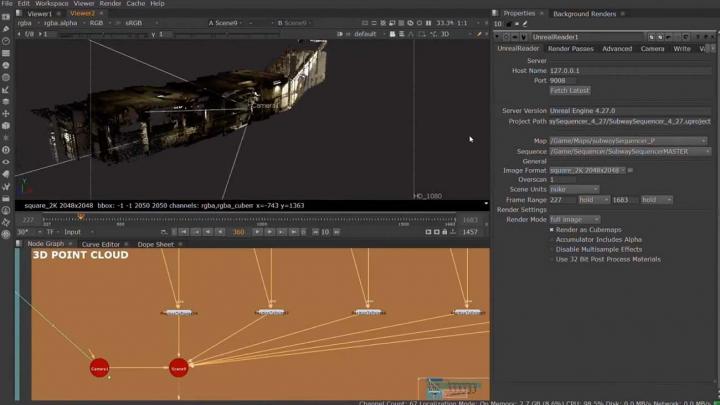

The UnrealReader Node in NukeX and Nuke Studio

Game engines and virtual production have become big players in the VFX industry, and as the technology continues to develop, so too do our tools. Integrating these new technologies into pipelines has caused problems in the past, which is why Foundry’s Research team has devoted its efforts to tackling them and speeding up the work of artists who run into them.

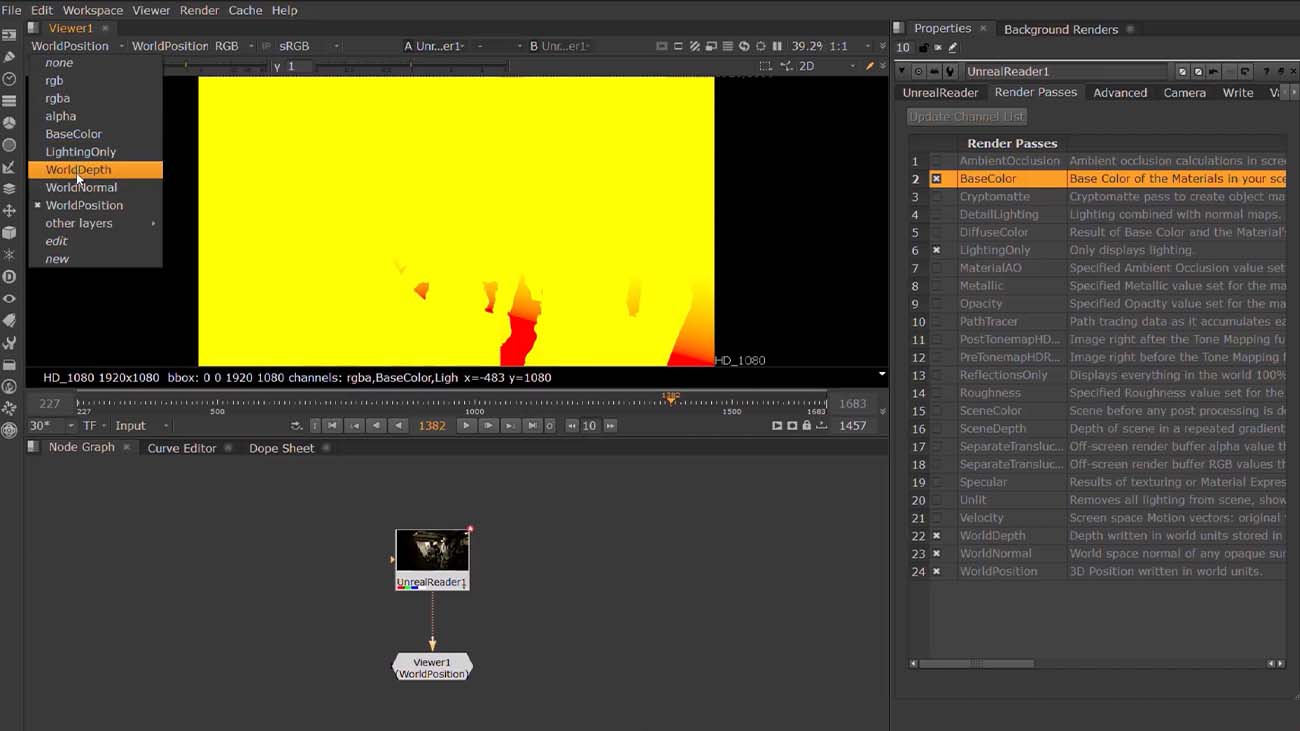

Our upcoming Nuke release sees the introduction of the UnrealReader node for NukeX and Nuke Studio. This enables artists to be creative in their compositing when using both Unreal and Nuke. Game engines are being used more and more in animation and VFX, but they provide less nuanced data than artists are used to from a standard offline renderer. The UnrealReader node addresses this by making it easier to request image data and utility passes from Unreal Engine, directly from NukeX.

This enables artists to see all the latest Unreal changes directly in their Nuke script while they refine the image using Nuke’s powerful compositing toolset. While the UnrealReader node streamlines the pipeline between Unreal and Nuke, it’s also an artist tool. Artists can request AOVs and stencil layers directly from Nuke, giving them maximum control in an environment they are comfortable with.

These developments pave the way for smoother and more powerful workflows for those who use Nuke and Unreal together in a pipeline, removing friction and bringing the power of Nuke into real-time projects, something the Nuke team discussed in depth at our recent Foundry Live event.

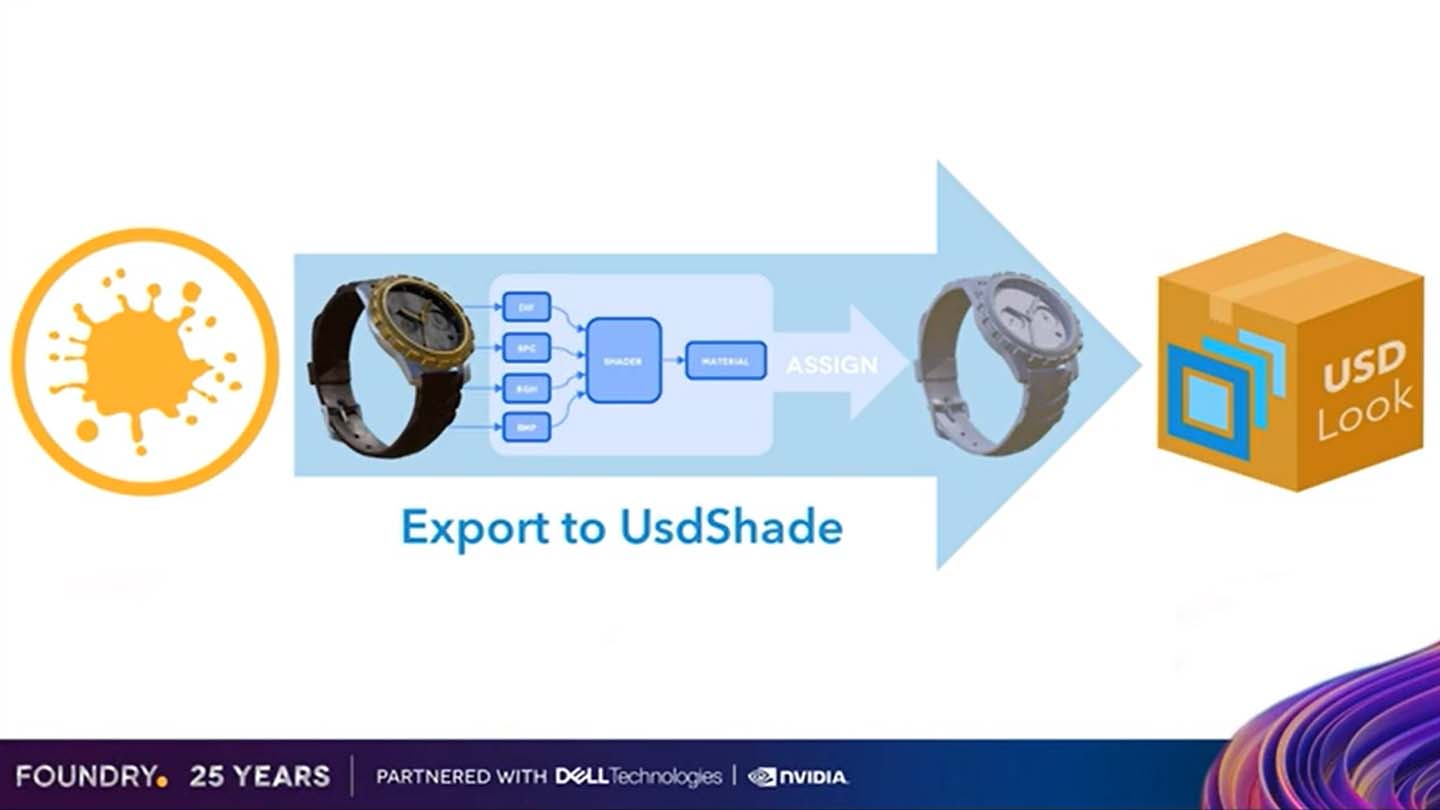

USD and Mari

Another major player in the technology game is USD. It has proved to be an important means of exchanging data across media and entertainment products and offers the opportunity to add consistency and efficiency between tools. To bring these benefits to our products, the next Mari release will see the inclusion of a USD Preview Look Export.

With the continued advancements happening in viewport technology, like the physically-based rendering viewport Hydra Storm found in Katana and Nuke, studios are able to view the characters, props and environments in their VFX scenes with better textures, reflections and lighting. To achieve a richer appearance, shading artists can take texture maps exported from Mari and plug them into the new USD Preview Shader, creating a flattened shader.

As Mari texture artists already work with an approximation shader like the one used in Katana, this enables Mari to automate these shading tasks and create the flat preview shader for Katana.
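To make the idea of a flattened preview shader more concrete, here is a minimal sketch in Python that writes out a small USD text (`.usda`) material wiring two texture maps into a `UsdPreviewSurface` shader. It is not a description of how Mari's export works. The material name, the texture file paths and the `build_preview_material` helper are all hypothetical, and the layout simply follows the publicly documented UsdPreviewSurface conventions (`UsdUVTexture` and `UsdPrimvarReader_float2` nodes feeding the surface inputs).

```python
# Hypothetical texture maps standing in for files exported from Mari.
# Each entry: surface input -> (file path, texture output channel,
#                               surface input type, texture output type)
TEXTURES = {
    "diffuseColor": ("textures/asset_diffuse.png", "rgb", "color3f", "float3"),
    "roughness": ("textures/asset_roughness.png", "r", "float", "float"),
}


def build_preview_material(name, textures):
    """Return .usda text for a Material that uses UsdPreviewSurface.

    Illustrative helper only: it formats text and does not call the USD API.
    """
    root = f"/{name}"
    lines = [
        "#usda 1.0",
        "",
        f'def Material "{name}"',
        "{",
        f"    token outputs:surface.connect = <{root}/PreviewSurface.outputs:surface>",
        "",
        '    def Shader "PreviewSurface"',
        "    {",
        '        uniform token info:id = "UsdPreviewSurface"',
    ]
    # Connect each surface input to the matching texture node output.
    for input_name, (_, channel, input_type, _) in textures.items():
        lines.append(
            f"        {input_type} inputs:{input_name}.connect = "
            f"<{root}/{input_name}Texture.outputs:{channel}>"
        )
    lines += ["        token outputs:surface", "    }", ""]

    # One shared reader that fetches the "st" texture coordinates.
    lines += [
        '    def Shader "stReader"',
        "    {",
        '        uniform token info:id = "UsdPrimvarReader_float2"',
        '        string inputs:varname = "st"',
        "        float2 outputs:result",
        "    }",
    ]

    # One UsdUVTexture node per texture map.
    for input_name, (path, channel, _, output_type) in textures.items():
        lines += [
            "",
            f'    def Shader "{input_name}Texture"',
            "    {",
            '        uniform token info:id = "UsdUVTexture"',
            f"        asset inputs:file = @{path}@",
            f"        float2 inputs:st.connect = <{root}/stReader.outputs:result>",
            f"        {output_type} outputs:{channel}",
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_preview_material("AssetPreview", TEXTURES))
```

Running the script prints a material definition that could be saved as a `.usda` file and bound to geometry for inspection in a Hydra-based viewer. A production exporter would more likely author the same prims through the USD API rather than by formatting text.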

This new development not only allows artists to develop preview looks for an asset earlier in their pipeline, but also gives them a better preview of assets authored in Mari within DCC Hydra viewers like Katana and Nuke.

At Foundry, we’re committed to keeping pace with the rapid development of USD, and Mari’s USD Preview Look Export is testament to that. We’re also ensuring that artist workflows stay consistent, so OpenColorIO v2 (OCIOv2) will be supported by both Mari and Nuke in the upcoming releases.

OCIOv2 is quickly becoming the standard for color management across the industry and brings with it long-awaited consistency between CPU and GPU color processing. This means GPUs can now be trusted to perform accurate color transforms, so artists see accurate and consistent color.

To find out more and uncover the rest of the features coming soon to Mari, watch the Look Development and Lighting talk at Foundry Live.

Uncover a more efficient pipeline

Interoperability is just one part of Foundry’s wider commitment to removing pipeline friction for artists and creating streamlined workflows. Our Research team has also been investing time in cloud, AI, real-time, machine learning and more.

An example of this work comes in the form of the ML toolset found inside Nuke. In Nuke 13.1, we are planning to expand on this with features such as the ability to use existing image-to-image PyTorch networks inside the NukeX Inference node, expanding the number of models that artists can work with in Nuke.

Another example is the work the Research team has been doing with cloud, and the foundation they’ve been building to make it easier and more practical to use. To find out more about this, and all the other work we’ve been doing, watch our Research and Innovation Showcase. Mathieu Mazerolle, Foundry’s Director of Product for New Technologies, is joined by Ari Rubenstein, VFX Supervisor, Matt Jacobs from Technicolor, Freelance Senior In-House Artist Shahin Toosi and Senior Compositor Indrajeet Sisodiya from Pixomondo, as they discuss how Foundry’s advances in AI, real-time, and cloud can be applied to creative workflows.

The Research and Product teams at Foundry are excited to get these upcoming feature improvements into the hands of artists, so you can advance your workflow and continue creating breathtaking projects on a more efficient pipeline.